In early 2024, the EU dropped the first comprehensive regulation on artificial intelligence: the EU AI Act. Fast-forward to now, November 2025, and software teams everywhere are sweating the details. The Act entered into force in August 2024, but August 2026 is when the real work starts for compliance teams. Think of it as the EU's way of saying, "AI is awesome, but let's not let it take over the world." In this guide, we'll take you from "What even is this?" to "How do I not get fined?" We'll cover why you're probably an AI provider, how to classify your systems, the obligations (hello, checklist), and even a secret weapon to make it less painful.

EU AI Act 2026: Why August 2026 Is the Real Deadline for Software Teams

The EU AI Act is the world's first comprehensive legal framework for artificial intelligence. It aims to foster innovation while slapping safeguards on risky AI systems. It guarantees the free circulation of AI systems across the EU, builds trust and competitiveness, and protects health, safety, and fundamental rights (including the protection of personal data). The regulation entered into force in August 2024, but its high-stakes chapters only start to apply within the next year.

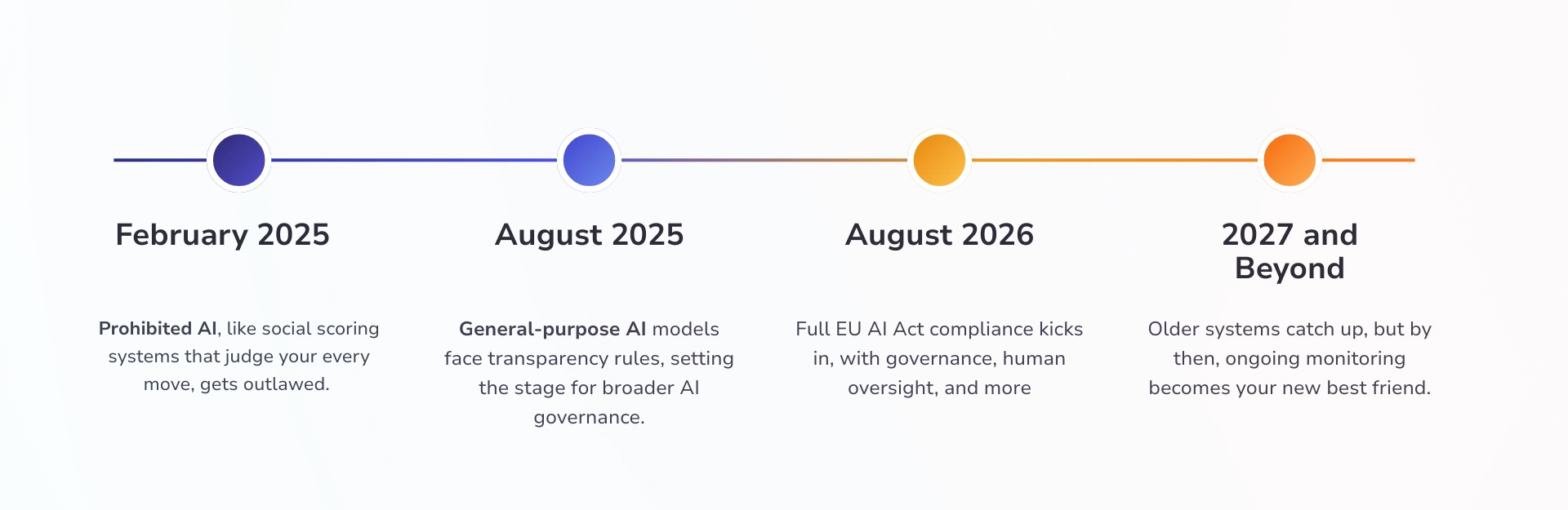

The Phased Rollout Timeline

- February 2025: Prohibited AI, like creepy social scoring systems that judge your every move, gets outlawed. If your AI system flirts with manipulation, it's time to pivot, and fast.

- August 2025: General-purpose AI models face transparency rules, setting the stage for broader AI governance.

- August 2026: This is the big one for high-risk AI systems (think hiring tools or credit scorers). Most of the remaining obligations kick in. These include governance, human oversight, and the transparency duties for chatbots and deepfakes, all meant to ensure your artificial intelligence doesn't go rogue.

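- August 2027 and Beyond: Two groups must comply by this date. The first is high-risk AI built into products already covered by EU safety legislation, such as medical devices or machinery. The second is GPAI models placed on the market before August 2025. By then, ongoing monitoring becomes your new best friend.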
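If you want the timeline in your tooling rather than on a sticky note, here's a minimal Python sketch. It encodes the application dates from Article 113 of the Act and tells you what already applies on a given day. The milestone labels are our own simplified summaries, not legal text, and the function names are illustrative.

```python
from datetime import date
from typing import List, Optional, Tuple

# Application dates from Article 113 of Regulation (EU) 2024/1689, sorted by date.
# Labels are simplified summaries for illustration, not legal text.
MILESTONES: List[Tuple[date, str]] = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy duties apply"),
    (date(2025, 8, 2), "General-purpose AI model obligations apply"),
    (date(2026, 8, 2), "Most remaining rules, incl. Annex III high-risk systems"),
    (date(2027, 8, 2), "High-risk AI in Annex I products; pre-August-2025 GPAI models"),
]


def milestones_in_force(on: date) -> List[str]:
    """Return the labels of all milestones that apply on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """Return the first milestone strictly after `on`, or None if all have passed."""
    for start, label in MILESTONES:  # relies on MILESTONES being sorted
        if start > on:
            return start, label
    return None
```

For example, `next_milestone(date(2025, 11, 15))` returns the August 2, 2026 entry. Subtracting the two dates gives you a countdown for your team board.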

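Ignore August 2026 at your peril. Fines for prohibited practices reach up to €35 million or 7% of total worldwide annual turnover, whichever is higher. Breaching most other obligations, including the high-risk requirements, can cost up to €15 million or 3%. But fear not; with smart compliance efforts, you'll turn this regulation into a competitive edge.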
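To see what "7% of annual turnover" means for your company, here's a small sketch of how the fine ceilings in Article 99 work. For most companies, the cap is whichever is higher: the fixed amount or the turnover percentage. For SMEs and start-ups, it is whichever is lower. These are maximums, not automatic penalties. The function name and tier constants are our own illustration.

```python
def max_fine_eur(worldwide_turnover_eur: float, fixed_cap_eur: float,
                 turnover_pct: float, is_sme: bool = False) -> float:
    """Upper bound of an administrative fine under Article 99 of the AI Act.

    Large undertakings: the HIGHER of the fixed cap and the percentage of
    total worldwide annual turnover. SMEs and start-ups: the LOWER of the two
    (Article 99(6)).
    """
    pct_amount = worldwide_turnover_eur * turnover_pct
    return min(fixed_cap_eur, pct_amount) if is_sme else max(fixed_cap_eur, pct_amount)


# (fixed cap in EUR, share of worldwide annual turnover)
PROHIBITED = (35_000_000, 0.07)          # prohibited practices, Art. 99(3)
OTHER_OBLIGATIONS = (15_000_000, 0.03)   # most other duties incl. high-risk, Art. 99(4)
```

Take a company with €1 billion in turnover. For a prohibited practice, its ceiling is €70 million, because 7% of its turnover exceeds the €35 million fixed cap. For a high-risk breach, its ceiling is €30 million.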

Are You an AI Provider?

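Under the EU AI Act, you're a provider if you develop an AI system or model (or have one developed) and then place it on the EU market or put it into service under your own name or brand. So if you're developing, fine-tuning, or building AI systems into your software for European users, congrats, you're in the club. It's not just Big Tech; even indie devs with clever AI models qualify. The regulation casts a wide net, covering providers based outside the EU too, to ensure everyone plays fair in the artificial intelligence game.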

Real-World Examples

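- Recommendation Engines in E-Commerce SaaS: A product recommender usually isn't high-risk, even if it crunches personal data to suggest buys, because that use case isn't on the Act's high-risk list. GDPR still applies, of course. Document your classification anyway so you can prove it.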

- CV-Screening Tools for HR: Recruitment and candidate-evaluation tools are explicitly listed as high-risk. They demand data governance, transparency, and human oversight to avoid bias blunders.

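- Fraud-Detection APIs in Fintech: The Act explicitly excludes fraud detection from the high-risk credit-scoring category. If the same model also feeds creditworthiness decisions, though, regulatory eyes will turn your way. Either way, accuracy and robustness are key.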

- Fine-Tuned AI Models Like LLMs in Services: If you fine-tune or wrap these for clients, you may become the provider of the resulting AI system. Depending on the use case, prepare for compliance requirements that touch everything from training data to human oversight.

The moral? Classify early, or risk a plot hole in your compliance efforts come 2026.

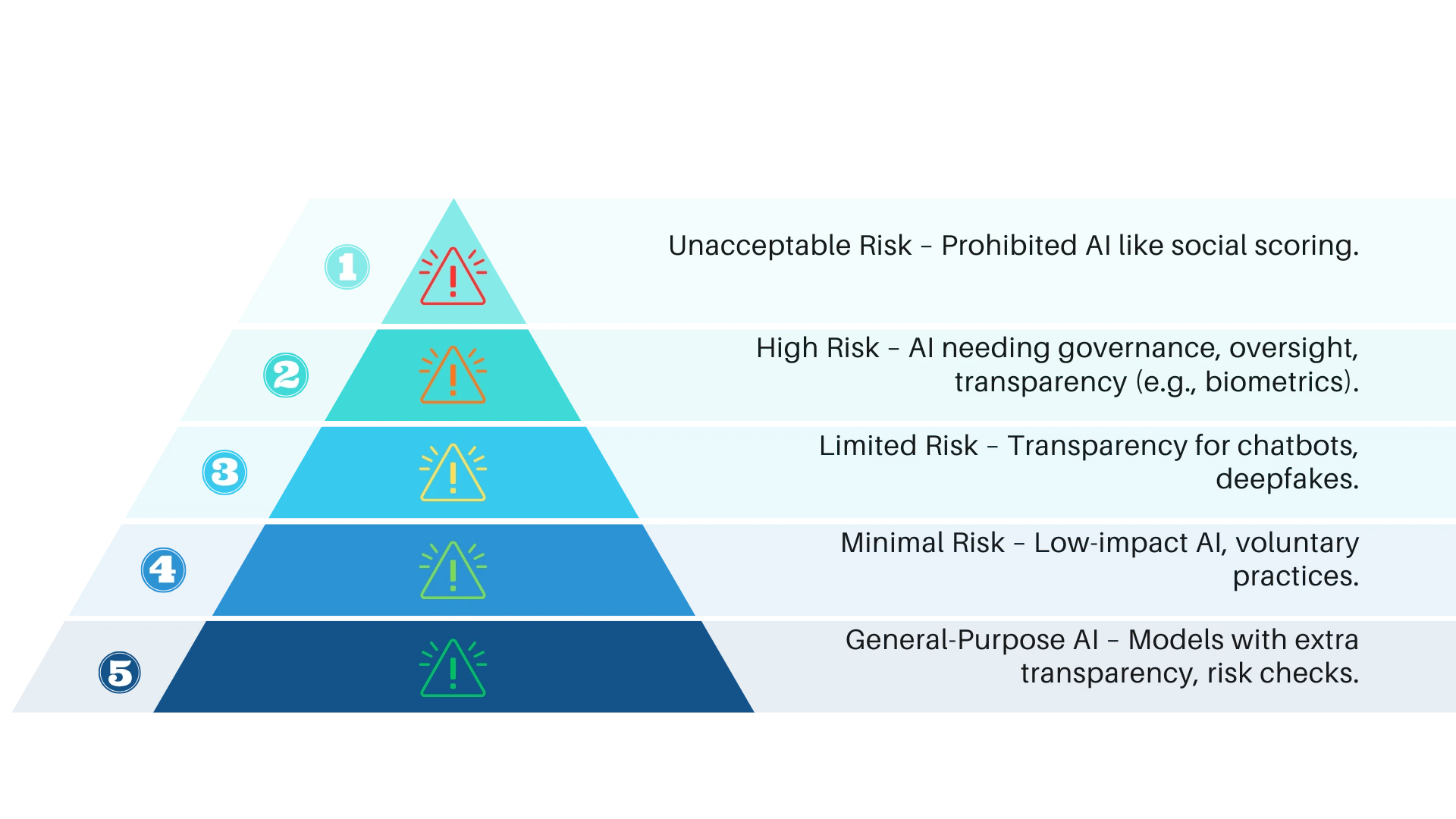

The Four Risk Categories You Must Classify

Now, the rising action: The EU AI Act sorts AI systems into a risk pyramid, like a choose-your-own-adventure book where the wrong path leads to fines. This risk-based approach keeps things proportional—minimal fuss for low-stakes artificial intelligence, ironclad rules for the heavy hitters. Get this right, and your AI governance journey smooths out.

Breaking Down the Pyramid

- Prohibited AI: The Forbidden Zone – Think dystopian stuff like social scoring, manipulative techniques that distort behavior, or systems that exploit people's vulnerabilities. These AI systems are outright banned; if yours smells like this, scrap it.

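- High-Risk AI: The High-Stakes Thriller – Systems with big impacts on people's lives, like biometric identification, hiring tools, credit scoring, or exam-scoring tools in education. They demand top-tier compliance requirements, including risk management, data governance, human oversight, and transparency. These protect health, safety, and fundamental rights, personal data included.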

- Limited-Risk AI: The Transparency Trap – Chatbots or deepfakes fall here. The focus is on clear labeling so users know they're dealing with artificial intelligence or AI-generated content, with no sneaky surprises.

- Minimal-Risk AI: The Chill Chapter – Everyday tools like spam filters. No mandatory hoops, but voluntary AI governance keeps you ahead of the curve.

Classify your AI models pronto—it's the key to unlocking tailored regulatory paths before August 2026.
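To make that first pass repeatable, you could encode it as a tiny triage helper. This is a minimal sketch under heavy assumptions. The use-case tags and sets are hypothetical simplifications of Article 5 (prohibited practices), Annex III (high-risk use cases), and Article 50 (transparency). A real classification still needs a careful reading of the Act and legal review.

```python
from enum import Enum
from typing import Set


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk (transparency)"
    MINIMAL = "minimal-risk"


# Hypothetical, heavily simplified tag sets for illustration only.
PROHIBITED_USES = {"social_scoring", "manipulative_techniques", "exploiting_vulnerabilities"}
HIGH_RISK_USES = {"recruitment_screening", "creditworthiness",
                  "biometric_identification", "education_assessment"}
TRANSPARENCY_USES = {"chatbot", "deepfake_generation", "synthetic_content"}


def classify(use_cases: Set[str]) -> RiskTier:
    """Return the strictest tier triggered by any of the declared use cases."""
    if use_cases & PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if use_cases & HIGH_RISK_USES:
        return RiskTier.HIGH
    if use_cases & TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

For example, `classify({"recruitment_screening", "chatbot"})` returns `RiskTier.HIGH`. One design caveat: the real tiers can overlap. A high-risk hiring chatbot also carries transparency duties, so a production version would return every applicable set of obligations rather than a single strictest tier.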

The Checklist: Your 2026 Survival Kit

Use this checklist to audit and align. Each obligation includes a deeper dive into what the requirement entails, so no vague legalese here.

Tick these off, and your compliance efforts will shine come 2026.
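If your team lives in code rather than spreadsheets, you can track the core provider obligations for high-risk systems as data. Here's a minimal sketch. The article numbers come from Regulation (EU) 2024/1689. The `Obligation` structure, the evidence field, and the helper names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Obligation:
    article: str
    title: str
    done: bool = False
    evidence: List[str] = field(default_factory=list)  # links to docs, tickets, logs


# Core obligations for providers of high-risk AI systems (simplified titles).
HIGH_RISK_CHECKLIST: List[Obligation] = [
    Obligation("Art. 9", "Risk management system"),
    Obligation("Art. 10", "Data and data governance"),
    Obligation("Art. 11", "Technical documentation"),
    Obligation("Art. 12", "Record-keeping (automatic event logs)"),
    Obligation("Art. 13", "Transparency and information for deployers"),
    Obligation("Art. 14", "Human oversight"),
    Obligation("Art. 15", "Accuracy, robustness and cybersecurity"),
    Obligation("Art. 17", "Quality management system"),
    Obligation("Art. 43", "Conformity assessment"),
    Obligation("Art. 48", "CE marking"),
    Obligation("Art. 49", "Registration in the EU database"),
    Obligation("Art. 72", "Post-market monitoring"),
]


def mark_done(checklist: List[Obligation], article: str, evidence: str) -> None:
    """Mark an obligation done, recording the evidence that backs it up."""
    for item in checklist:
        if item.article == article:
            item.evidence.append(evidence)
            item.done = True
            return
    raise KeyError(f"No obligation for {article}")


def open_items(checklist: List[Obligation]) -> List[str]:
    """List the obligations that are not yet marked done."""
    return [f"{item.article}: {item.title}" for item in checklist if not item.done]
```

The design choice is that an item only counts as done once it has an evidence link, which mirrors what an auditor will ask for.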

Limited-Risk, Minimal-Risk, and GPAI

Not all AI systems are high-risk. Limited-risk ones, like your friendly neighborhood chatbot, hinge on transparency. Users must be told when they're interacting with artificial intelligence, and AI-generated content such as deepfakes must be labeled. The usual data protection rules still apply whenever personal data is in the mix.

- Minimal-Risk AI: These low-key players (e.g., basic filters) have no specific must-dos beyond the general AI literacy duty. But adopting voluntary AI governance? Smart move for future-proofing.

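- GPAI (General-Purpose AI Models): The wildcard, including the models behind generative AI. Obligations have applied since August 2025: technical documentation, a copyright policy, and a public summary of training content. The Commission's power to fine GPAI providers starts in August 2026. If your AI models are large enough to pose systemic risk, add model evaluations, adversarial testing, serious-incident reporting, and cybersecurity safeguards to your regulatory toolkit.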

Underestimate these, and your compliance requirements could snowball, so it’s probably better to weave them into your AI governance narrative now.
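What counts as "large-scale"? Article 51(2) presumes a GPAI model poses systemic risk when its cumulative training compute exceeds 10^25 floating-point operations. The quick check below uses the common rough estimate of about 6 × parameters × training tokens for dense transformer training. That heuristic is our assumption, not a formula from the Act, and the function names are illustrative.

```python
# Article 51(2): presumption of high-impact capabilities (systemic risk)
# when cumulative training compute is greater than 10^25 FLOPs.
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough training-compute estimate using the ~6 * N * D heuristic for
    dense transformers (an assumption, not a method defined by the Act)."""
    return 6 * n_params * n_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True if compute strictly exceeds the Article 51(2) threshold."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD
```

For example, take a hypothetical 70-billion-parameter model trained on 15 trillion tokens. It comes out around 6.3 × 10^24 FLOPs, below the threshold. The Commission can also designate models as systemic-risk based on other criteria, so a `False` here is a starting point, not a clearance.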

How Brainframe Removes the Pain from EU AI Act Compliance

And now, the happy ending: enter Brainframe, your assistant in this EU AI Act saga. This governance platform turns compliance chaos into a breeze, automating the heavy lifting for software teams eyeing August 2026. From classifying your AI systems to organizing human oversight, Brainframe can handle it:

- Risk Classification and Checklists: Easily sort your AI models into categories, flagging prohibited AI pitfalls and high-risk systems early.

- Documentation and Oversight Dashboards: Generate and centralize your technical docs, log events, and enable human oversight, protecting personal data without the manual grind.

- Conformity Workflows: Streamline conformity assessments and CE marking, reducing compliance effort by up to 70% so you can focus on innovating.

- AI Act Compliance Framework: Break the Act down into structured requirements and link your existing controls, policies, and procedures to them, so you don't have to start from scratch.

- Audit-ready Proofs: When the auditor inevitably shows up, skip the panic and the flood of emails to colleagues hunting for documentation. Just send all your controls with one click and get on with your usual work.

Quick Actions You Can Take Today

Don't let August 2026 sneak up like a buggy deployment. Start your EU AI Act compliance today with these bite-sized moves. These quick wins will sharpen your AI governance and get your high-risk AI systems on track without derailing your sprint.

- Classify One AI Model: Grab a coffee, review a single feature (e.g., your recommendation engine), and map it to the risk pyramid—prohibited AI? High-risk? Done in 30 minutes.

- Download the Checklist: Save this post's table as your EU AI Act compliance starter pack; print it and pin it to your team's board for instant reference.

- Run a Mini-Audit: Pick one obligation (like data governance for personal data) and scan your codebase and datasets, using free tools like pandas for quick bias checks.

- Flag Transparency Gaps: Add a clear "AI-powered" label to one user-facing feature to get a head start on limited-risk transparency requirements.

- Book a Brainframe Demo: Jumpstart automated AI governance—schedule a 15-minute call at brainframe.com to see how it handles 2026 deadlines for you.
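For the mini-audit, a first bias check on a screening tool can be as simple as comparing selection rates across groups with pandas. This minimal sketch assumes a DataFrame with one row per candidate, a group column, and a 0/1 outcome column. The column names and data are hypothetical. The 0.8 alert level is borrowed from the US "four-fifths" rule of thumb, since the AI Act itself sets no numeric bias threshold.

```python
import pandas as pd


def selection_rates(df: pd.DataFrame, group_col: str, outcome_col: str) -> pd.Series:
    """Share of positive outcomes (e.g. 'advanced to interview') per group."""
    return df.groupby(group_col)[outcome_col].mean()


def disparate_impact_ratio(rates: pd.Series) -> float:
    """Lowest group selection rate divided by the highest (1.0 = parity)."""
    return float(rates.min() / rates.max())


# Hypothetical screening results: 1 = candidate advanced, 0 = rejected.
results = pd.DataFrame({
    "gender":   ["f", "f", "f", "f", "m", "m", "m", "m"],
    "advanced": [1, 0, 0, 1, 1, 1, 0, 1],
})

rates = selection_rates(results, "gender", "advanced")
ratio = disparate_impact_ratio(rates)

# Illustrative alert threshold only (US four-fifths heuristic, not an AI Act rule).
if ratio < 0.8:
    print(f"Possible bias: ratio {ratio:.2f}, rates {rates.to_dict()}")
```

On the sample data, the selection rates are 0.5 and 0.75, so the ratio is about 0.67 and the check fires. Treat a flag as a prompt to investigate your data and features, not as proof of discrimination.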

Small steps today = zero fines tomorrow. Your artificial intelligence (and your CTO) will thank you!